Research Labs and Research Areas

- Research Laboratories

- Illustrative Research Areas

- Faculty Research Specialties

- Other Facilities and Resources

- Graduate Student Profiles

Research Laboratories

Organized research laboratories and groups within the area of spatial informatics include:

- Geosensor Networks Lab (GSN Lab)

- Multisensory Interactive Media Lab (MIM Lab)

- Spatial Knowledge and Artificial Intelligence Lab (SKAI Lab)

- Virtual Environments and Multimodal Interaction Laboratory (VEMI Lab)

SCIS is also home to the Spatial Data Science Institute. This collaborative research and scholarly center engages all members of the University of Maine Spatial Informatics faculty, research collaborators across the campus, and cooperating researchers at universities nationally and internationally.

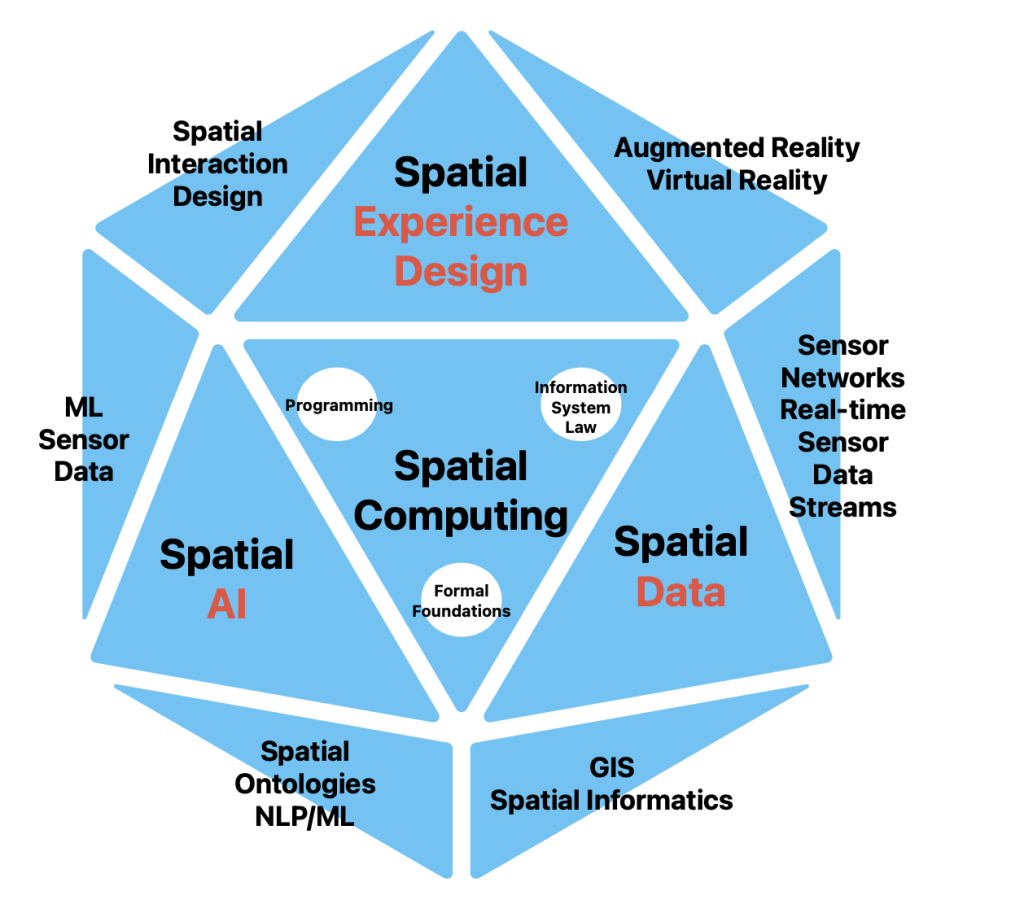

Illustrative Research Areas

Graduate students and faculty are engaged in far-ranging yet complementary research programs. Many of the projects involve multi-investigator and cross-disciplinary efforts. The following illustrate the general areas within which specific topics are being pursued.

- Spatio-Temporal Models: A growing amount of data and information has important spatial and temporal dimensions. We need methods to manage, query, and access both dimensions.

Research areas:

- Event-based spatiotemporal models, such as in the Maine E-DNA project

- Generic and domain-specific spatial ontologies

- Geosensor Networks: Networks of small-form sensors with computing platforms and wireless communication, streaming live data, are deployed in massive numbers in geographic space. Geosensor networks are the next-generation environmental platforms that deliver a microscopic view of geographic space. We need advanced methods for building robust, intelligent systems that integrate sensing in space and time with an understanding of events in space and time.

Research areas:

- The field model as a foundation for integrating sensor data streams in geographic information systems

- Real-time Processing of Massive Sensor Data Streams for Disaster Response

- In-network data aggregation and spatial query execution in wireless geosensor networks

- Qualitative and quantitative in-network algorithms to detect and incrementally track events

- Mobile geosensor networks

- User Interfaces and Interactions: As devices get smaller and are used in different environments, new interaction paradigms are needed to suit the differing conditions.

Research areas:

- Space-time visualization environments

- Supportive navigation technologies for the visually impaired

- Mixed Reality (Virtual and Augmented Reality): Despite being a relatively new technology, Mixed Reality is one of the fastest-growing markets across the globe. It is already disrupting a broad cross-section of industries, including education, medicine, and retail, as well as entertainment and social life. However, there are numerous areas to improve.

Research areas:

- Multisensory technologies (including touch, smell, and taste)

- Immersion, Presence, and Interaction in VR

- Human sensory perception and multimodal interaction

- Human-Computer Interaction (HCI)

- AI-Based Spatial Knowledge Representation and Information Integration: Growing heterogeneous collections of information (maps, images, text, video, time series) need to be integrated and searched for patterns.

Research areas:

- Semantic similarity models

- Event data models

- Ontology-based semantic data integration

- Natural Language Processing for automated spatial data extraction from texts

Faculty Research Specialties

The key knowledge advancement interests of the faculty include the following:

Dr. Nicholas Giudice: perception, cognitive neuroscience, human factors engineering, neurocognitive engineering, multimodal interaction, and spatial cognition

Dr. Torsten Hahmann: spatial informatics, knowledge representation, artificial intelligence, logic, ontologies of space and time, modular and hierarchical ontologies

Dr. Silvia Nittel: real-time sensor data streaming, fields as formal foundation for processing sensor data streams, geosensor networks, decentralized spatial computing, blockchain for sensor data streams, spatial database systems

Dr. Nimesha Ranasinghe: multisensory interactive media, augmented reality, and human-computer interaction

See detailed information about the research interests of each of these faculty members at the links above or at Faculty and Staff.

Emeriti:

Dr. Kate Beard: geographic information systems, digital libraries, uncertainty in spatial data, information visualization, spatial and temporal analysis

Dr. Max Egenhofer: geographic database systems, spatial reasoning, formalizations of spatial relations, user interface design, spatial query languages

Dr. Harlan Onsrud: information system legal and ethical issues, combined technological and legal approaches in addressing access, security, privacy, and intellectual property issues, STEM + Computing education research